While companies such as OpenAI and Midjourney were busy building AI tools that operate in the digital realm, such as text chat and image generation, a startup called Covariant, founded by three former OpenAI researchers, took a different path: bringing artificial intelligence into the physical world.

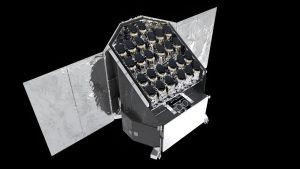

The company, based in Emeryville, California, develops technology that lets robots pick up, move and sort items inside warehouses and distribution centers.

The core goal is to give robots the ability to understand what is happening around them and to make the right decision in the moment. The company also aims to give its robots a broad understanding of English, so that people can interact with them as if they were a physical version of ChatGPT.

Although the technology is still under development and far from perfect, it is a clear sign that the systems behind text chatbots and image generators will soon be used to run machines in factories and warehouses, and even on roads and in homes.

From digital data to practical intelligence

This approach rests on the same foundation as chatbots: learning from vast amounts of data. Just as ChatGPT acquired its ability to write and analyze by studying text from across the internet, robots can improve as they are fed more visual and sensory data.

Covariant, which has raised $222 million, does not build robots itself; it develops the software that drives them. It plans to deploy this technology in warehouses first, before moving on to manufacturing plants and possibly even self-driving cars.

The systems behind this technology are called neural networks, loosely inspired by the way neurons in the brain work. By identifying patterns in huge amounts of data, these networks learn to recognize words, sounds and images, and even to generate them. This is the same principle that enabled OpenAI to build ChatGPT.

The latest step along this path is combining several types of data. By studying images together with the text that accompanies them, for example, a system learns the relationship between an object's appearance and its description, coming to understand that the word "banana" refers to a curved yellow fruit. OpenAI's Sora, which can generate video clips from a short text description, was built on this approach.
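To make the idea of linking "appearance and description" more concrete, here is a minimal Python sketch of one common way such a link is used once it has been learned: images and phrases are mapped to vectors in a shared space, and a phrase is matched to the image whose vector points in the most similar direction (cosine similarity). The vectors below are hand-written stand-ins for what trained image and text encoders would produce, and their four dimensions are an assumption chosen for illustration. This is not Covariant's or OpenAI's actual method, and no training happens here.

```python
import math

# Hypothetical, hand-written embeddings standing in for vectors that trained
# image and text encoders would produce in a shared space.
# Assumed dimensions, for illustration only: [yellow, curved, fruit, box-shaped]
IMAGE_EMBEDDINGS = {
    "banana.jpg": [0.9, 0.9, 1.0, 0.0],
    "lemon.jpg": [0.9, 0.1, 1.0, 0.0],
    "cardboard_box.jpg": [0.2, 0.0, 0.0, 1.0],
}

TEXT_EMBEDDINGS = {
    "banana": [0.8, 0.9, 1.0, 0.0],
    "a curved yellow fruit": [0.9, 0.8, 0.9, 0.0],
    "a box": [0.0, 0.0, 0.0, 1.0],
}


def cosine(a, b):
    """Cosine similarity: 1.0 means the two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_image(phrase):
    """Return the image whose embedding is most similar to the phrase's."""
    query = TEXT_EMBEDDINGS[phrase]
    return max(IMAGE_EMBEDDINGS, key=lambda name: cosine(query, IMAGE_EMBEDDINGS[name]))


for phrase in TEXT_EMBEDDINGS:
    print(phrase, "->", best_image(phrase))
```

Both "banana" and "a curved yellow fruit" land on `banana.jpg`, a toy version of how a model can act on an instruction that never names the object directly.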

Covariant's founders, Professor Pieter Abbeel of the University of California, Berkeley, and his former students Peter Chen, Rocky Duan and Tianhao Zhang, used the same approach to train systems that run sorting robots in warehouses around the world.

For years, the company collected vast amounts of data from cameras and sensors documenting how its robots work. By combining this data with the enormous body of text used to train ChatGPT, it built a system that gives robots a broader understanding of the world around them.

As a result, the robot can handle unexpected situations. It knows how to pick up a banana even if it has never encountered one before. It can also follow spoken or written instructions: if someone tells it to "pick up a banana," it will do so, and if it is told to "pick up a yellow fruit," it will understand what is meant as well.

The system can even generate imagined video clips that predict what might happen as it attempts a task, such as picking up a banana. Although these videos have no direct practical use in the warehouse, they show how well the robot understands what is going on around it.

Challenges and limits

The new technology, called the Robotics Foundation Model (RFM), is not free of mistakes, just like chatbots. It sometimes drops objects or misunderstands instructions.

Gary Marcus, an AI researcher and professor emeritus of psychology and neural science at New York University, believes the technology is promising in settings that can tolerate a certain level of error, such as warehouses. But he warned that it would be harder to deploy in manufacturing plants or in potentially dangerous situations: "It depends on the cost of an error. If a robot weighs 150 pounds and makes a wrong move, the consequences could be costly."

Still, researchers expect these systems to improve quickly as more varied data is fed into them. That sets them apart from traditional robots, each of which was programmed to perform one specific, repetitive motion, such as driving a screw into a particular spot or lifting boxes of a single size. Those robots could not cope with random or unexpected situations.

Today, by learning from digital examples that mimic the real world, robots can deal with the unknown and respond to text or voice instructions, just as chatbots do. This means that robots, like image and text generation systems, will become more flexible and quicker to adapt.

As Dr. Chen put it: "What is in the digital data can transfer into the real world."
